Canadian Freeze-Dried Food Companies 1 Apr 6:48 AM (4 days ago)

(This post is really short, but it was banned by the moderators on Reddit, so it's here)

I've been researching freeze-dried food companies for my upcoming bike-packing trip. Because of recent events, I want to be sure to select only brands that are Canadian. Here are some top freeze-dried food companies for Canadians.

Happy Yak

Based in Quebec, Happy Yak offers a diverse range of freeze-dried meals, including vegetarian and lactose-free options, emphasizing real ingredients and wholesome nutrition. https://happyyak.ca/

Pure Choice Foods

Located in Kanata, Ontario, Pure Choice Foods provides high-quality freeze-dried fruits, vegetables, meats, and treats, focusing on additive-free and gluten-free products. purechoicefoods.ca

Flat Out Feasts

This Canadian company specializes in keto-friendly freeze-dried meals, eliminating unnecessary carbohydrates to provide nutrient-dense options for outdoor enthusiasts. https://flatoutfeasts.ca/

Briden Solutions

Based in Calgary, Alberta, Briden Solutions offers a wide selection of freeze-dried and dehydrated foods suitable for camping and emergency preparedness. bridensolutions.ca

Context Awareness and Context Recognition in Modern Decision-Making - 2 12 Mar 12:27 PM (24 days ago)

Section Two: Context

In this section we look at the concept of context as it applies to both human and computer cognition. After laying out some perspectives of context and its role in decision-making, we identify and describe three major interpretations of context: as a schema, as a frame, and as a model.

Perspectives on Context

Context is “a complex description of shared knowledge about physical, social, historical, or other circumstances within which an action or an event occurs… (that) does not intervene explicitly in a problem solving but constrains it” (Brézillon, 2004).

Dey and Abowd (1999) define context as “any information that can be used to characterize the situation of an entity. An entity is a person, place, or object that is considered relevant to the interaction between a user and an application, including the user and applications themselves” (see also Abowd, Dey, et al., 2001; see Zainol and Nakata (2010, pp. 126-127) for additional definitions along the same lines).

Winograd (2001, p. 5) argues that context is defined by use rather than by features: “Context is an operational term: something is context because of the way it is used in interpretation, not due to its inherent properties.” He offers a communication and application programming architecture using a ‘blackboard’ metaphor that supports context-aware computing.

Sato (2003, p. 1324) argues that we should represent context through “a pattern of behavior or relations among variables that are outside of the subjects of design manipulation and potentially affect user behavior and system performance.” He describes a three-part strategy for context-sensitivity: sensing contextual changes, re-configurable architecture, and creating and managing contexts (p. 1327).

Dourish (2004) describes an incompatibility between two views of context.

One comes from positivist theory—context can be described independently of the actions done; the definition proposed by Dey matches this view.

Another view can be sustained by phenomenological theory—context emerges from the activity and cannot be described independently.

Guarino & Guizzardi (2015, 2016) offer an account of context as a ‘scene’ such that “events emerge from scenes as a result of a cognitive process that focuses on relationships: relationships are therefore the focus of events” (2016, p.2) and where ‘scenes’ are whatever occurs in a certain region of spacetime.

Types of Context

Some types of context, as described in the literature:

Activity, Identity, Location, and Time (AILT) (Dey and Abowd, 1999)

Context as related to location, nearby people, hosts or objects, as well as changes to them over time (Schilit et al., 1995)

Context as tailored to the individual’s circumstances (Brown et al., 1997). For example, this “includes the capabilities of the mobile devices, the characteristics of the network connectivity and user specific information such as emotional state, attention focus, and orientation.”

Context as behavioural. The Context-based Reasoning (CxBR) modelling paradigm asserts that context “contains the functionality to allow the agent to successfully ‘navigate’ through the current situation."

Context across levels of personal, project, group and organisation. It consists of people and their expertise, information sources, informational documents and the evaluation of their relevance, and relevant pragmatic documents (Snowden and Grasso, 2000).

Context as a spectrum of data described from a user perspective: computing context, user context, physical context, time context, and social context (Gu, 2009).

Context as related to capability and affordances. No reference for this was found, but the representation is a natural progression from the previous perspectives.
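As an illustration of the first typing in this list, the four AILT dimensions can be sketched as a simple record. This is a minimal sketch only: the class name `Context`, the helper `changed_dimensions`, and the sample values are all hypothetical and are not drawn from Dey and Abowd's work.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Context:
    """One context record using the four AILT dimensions (hypothetical type)."""
    activity: str
    identity: str
    location: str
    time: datetime


def changed_dimensions(old: Context, new: Context) -> list[str]:
    """Return the names of the AILT dimensions that differ between two records."""
    names = ["activity", "identity", "location", "time"]
    return [n for n in names if getattr(old, n) != getattr(new, n)]


# Illustrative values only
before = Context("patrolling", "unit-1", "checkpoint", datetime(2025, 3, 12, 9, 0))
after = Context("resting", "unit-1", "base", datetime(2025, 3, 12, 17, 30))
print(changed_dimensions(before, after))
```

Comparing two records in this way reflects the idea, noted by Schilit et al., that changes over time are themselves part of context.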

Context-Aware Decision Support

Computational context-aware decision support (CaDS) systems are evolving from ontology-based expert systems to attribute-based neural network systems. A CaDS system “consists of a situation model for shared situation awareness” (Feng et al., 2009, p. 455).

Such systems are intended to address information overload, for example, in a Tactical Information Prioritization System (TIPS) (Marmelstein et al., 2008, p. 259). The aim of such research “is to enhance the decision-maker's perception, comprehension, and projection of the underlying knowledge space” (Hanratty et al., 2009, p. 1).

Dourish and Bellotti (1992, p. 107) state that awareness is an understanding of the activities of others, which provides a context for your own activity. “Awareness supposes that one is able to transform pieces of contextual knowledge into a proceduralized context at the current focus of attention” (Mäkelä et al., 2018, p. 7253).

To date, context-aware decision support systems have been designed along the lines of expert systems, employing "ontology-based decision support” and consisting of “sensor agents to detect raw-level data, a context management agent for handling context data, an information service agent, an operational decision support agent, and user agents for maintaining user information” (Song et al., 2010, p.1).

Contemporary approaches are studying the use of deep learning. Early work found “the intuition of equating the template attribute weights to neural network weights resulted in a good method to learn the weights directly from observation of prior agent behaviour” (Gonzalez, 2004, p. 169) supporting Context-based Reasoning (CxBR) as “a human behaviour modelling technique that uses this approach to model human behavior.”

Context Awareness and Recognition

‘Context awareness’ denotes the capability or fact of being aware of context; by contrast, ‘context recognition’ describes the process or method of achieving context awareness.

Bricon-Souf and Newman (2007) describe context awareness as including "the ability... to detect, sense, interpret, act and respond to aspects of the environment, such as location, time, temperature or user identity."

We could say it is the ability of a system to examine its environment, to react to dynamic changes such as the location of the user and the collection of nearby people, hosts, and accessible devices, and to adapt its behavior based on the context of the application and the environment.

We see similar definitions of 'context awareness' applied to both human and computer applications. Dey (1999) for example writes “A system is context-aware if it uses context to provide relevant information and/or services to the user, where relevancy depends on the user's task.”

A variety of context recognition mechanisms may be employed. For example, in a survey of research on context recognition in surgery, Pernek and Ferscha (2017) identify the following:

Environment tracking - use of “pluggable monitors and devices that can be connected and used to infer surgical workflow” (p. 1722)

Kinematic tracking - “focused on tracking the location of surgical instruments or quantifying hand movements of surgeons” (p. 1723)

Video tracking - “recognize actions from intra-body video images” (p. 1723)

Cognitive state context - through eye-gaze, skin response, heart-rate, force (p. 1724ff)

In computer applications, the predominant mechanisms employed were machine learning or neural network-based pattern recognition algorithms. For example, Radu et al. (2018) study “the benefits of adopting deep learning algorithms for interpreting user activity and context as captured by multi-sensor systems” (p. 157.2). Similarly, Billones et al. (2018) discuss the use of deep learning for vehicular context recognition. Alajaji et al. (2020) “propose DeepContext, a deep learning based network architecture for recognizing a smartphone user's current context.”

Context as schema

In its general sense, a schema is a semantic representation that combines a generalised form with elements or blank spaces that are filled by concrete particulars to constitute an instance of the schema (Bartlett, 1932; Corcoran and Hamid, 2016).

Context may be thought of as “a mental codification of experience that includes a particular organised way of perceiving cognitively and responding to a complex situation or set of stimuli” (Merriam-Webster, 2024). There are senses of ‘schema’ in logic, psychology, and computer science. Schemas may be thought of variously as:

consisting of i) a set of words and blanks, ii) a mechanism for filling blanks, or

consisting of i) individual concepts, ii) mechanisms to connect concepts (Gal'perin, 1989, p. 65)

consisting of scripts (Schank and Abelson, 1977)
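The first sense in this list, words and blanks plus a mechanism for filling them, can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the schema text, the blank names, and the functions `blanks` and `instantiate` are hypothetical and are not taken from the cited sources.

```python
import re

# Hypothetical schema: fixed words interspersed with named blanks
SCHEMA = "{agent} moves {item} from {origin} to {destination}"


def blanks(schema: str) -> list[str]:
    """List the blank names in the order they appear in the schema."""
    return re.findall(r"\{(\w+)\}", schema)


def instantiate(schema: str, particulars: dict[str, str]) -> str:
    """Fill every blank with a concrete particular to obtain an instance."""
    missing = [b for b in blanks(schema) if b not in particulars]
    if missing:
        raise ValueError(f"unfilled blanks: {missing}")
    return schema.format(**particulars)


instance = instantiate(
    SCHEMA,
    {"agent": "The convoy", "item": "supplies", "origin": "the depot", "destination": "the camp"},
)
print(instance)
```

The filling mechanism here is plain string substitution; a richer mechanism might also constrain what kind of particular each blank accepts.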

Schemas are recognized to be constantly changing. Bartlett’s “concept of schema emphasises the dynamic and evolving nature of these cognitive constructs, which continuously adapt as we encounter new information” (Main, 2023).

Schema Development

Depending on the discipline or perspective, schema development may be described as ‘orientation’, ‘view’, or ‘case-based’.

‘Orientation’ is one of the steps in the OODA loop, discussed above. Orientation is depicted as “a schema to elucidate the role of human cognition (perception, emotion, and heuristics) in defense planning in a non-linear world characterized by complexity, novelty, and uncertainty” (Johnson, 2023, p. 43).

In IT and database development for systems such as JBI-IM (discussed above), context is represented as the development of various ‘views’ representing various ways to display underlying data schemas.

Schema Activation

Schema ‘activation’ is the deployment or retrieval of a schema to be applied to, or to describe, a particular situation, and is often depicted as a cognitive process. For example: “Activating schemata and training students to use reading strategies are both generally effective in reading comprehension skills” (Cho and Hyun, 2020, p. 49). “Through schema activation, judgments are formed based on internal assumptions (bias) in addition to information actually available in the environment” (Worthy et al., 2024).

Schema Change

Schemas may change either through accommodation or assimilation of new data through either a top-down or bottom-up process.

For example, in the Composition Modeling Framework (CMF) (Staskevich et al., 2007), "when existing schemas change on the basis of new information, we call the process accommodation. In other cases, however, we engage in assimilation, a process in which our existing knowledge influences new conflicting information to better fit with our existing knowledge, thus reduc(ing) the likelihood of schema change” (Worthy et al., 2024).

Context as frame

A ‘frame’ is most generally thought of as an organisation of experience (Goffman, 1974) and in this sense more of a cognitive or psychological construct than semantic. It is an interpretation of reality “that puts the facts or events referred to in a certain perspective” (Morasso, 2012, p. 5).

From a more computational perspective, Minsky’s (1974) account is an elaboration of the schema. “Here is the essence of the theory,” writes Minsky. “When one encounters a new situation (or makes a substantial change in one's view of the present problem) one selects from memory a structure called a Frame. This is a remembered framework to be adapted to fit reality by changing details as necessary.”
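Minsky's description of selecting a remembered structure and changing its details can be sketched as follows. This is a loose illustration, not Minsky's formalism: the `Frame` class, the slot names, and the cue-overlap rule used to select a frame are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Frame:
    """A remembered framework: a name plus default slot values (hypothetical)."""
    name: str
    defaults: dict[str, object] = field(default_factory=dict)

    def adapt(self, observed: dict[str, object]) -> dict[str, object]:
        """Start from remembered defaults and change details to fit what is observed."""
        return {**self.defaults, **observed}


# Illustrative memory of two frames
MEMORY = [
    Frame("room", {"walls": 4, "door": "closed", "window": True}),
    Frame("street", {"lanes": 2, "traffic": "light"}),
]


def select_frame(memory: list[Frame], cues: set[str]) -> Frame:
    """Pick the frame whose slot names overlap most with the cues noticed (assumed rule)."""
    return max(memory, key=lambda f: len(cues & set(f.defaults)))


frame = select_frame(MEMORY, {"walls", "door"})
view = frame.adapt({"door": "open"})
print(frame.name, view)
```

Slots that are not observed keep their remembered defaults, which is how expectations carry over into a new situation in this sketch.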

Similarly, in their consideration of choice theory in uncertain conditions, Tversky and Kahneman argue that “the normative and the descriptive analyses of choice should be viewed as separate enterprises” (1986, p. s275), with framing belonging to the latter (for example, where someone is risk-tolerant or risk-averse).

Lakoff (2010, p. 71) describes frames as “structures (that) are physically realized in neural circuits in the brain. All of our knowledge makes use of frames, and every word is defined through the frames it neurally activates.”

Examples

The concept of a frame is at once less formal and more detailed than the schema, and consists not only of a generalised description of a situation or collection of data, but also objectives, expectations or values. These are illustrated with the following examples:

“The common good” in diplomacy in military affairs (Karadag, 2017).

“the frame of arms control” in distribution of verification resources (Avenhaus and Canty, 2011).

“Luttes de sens, cadrages et grammaire lexicale en contexte révolutionnaire” (Struggles for meaning, framing and lexical grammar in a revolutionary context) (Rey, 2020).

“Framing war: Public opinion and decision-making in comparative perspective” - “uses the recent war on Iraq as a case study, focusing on the elite and media framing of this event in order to examine the interaction between the political elite and the mass public” (Olmastroni, 2014).

“the analysis of the strategic culture of Hungary is approached from a perspective that will frame the cultural and ahistorical view of the international policy of the neorealists with its own cultural and historical dimension” (Jeremić, 2021, p. 51).

“identifying four related concepts that help frame how a COP may improve an organization’s efficiency and effectiveness: effectiveness-based measures, decision rights,schwerpunkt, and neutral integrators” (Pyles et al., 2008, p. 5).

Frame Vs Framework

A frame should be distinguished from the related but distinct concept of the ‘framework’. The latter is not a cognitive or psychological construct, but rather a method or process designed to explain, guide or improve decision-making (for example, Elgoff and Smeets, 2023, p. 502). In this context, a framework is best viewed as a decision-making or design tool (see ‘Decision-Making’, above).

Context in metaphor

Metaphor is a powerful instrument for creating and representing frames in cases where literal representation is insufficient.

“The concepts that govern our thought are not just matters of intellect,” writes Lakoff (1980, p. 3). The metaphor ‘argument is war,’ for example, “is one that we live by in this culture; it structures the actions we perform in arguing.” Similarly, Taylor (2008, p. iii) writes, “The conception of literal meaning adopted by both semantic and pragmatic metaphor theorists, which roughly indicates an adherence to a lexical authority and conventionally accepted grammar, is far too limited in scope to account for what is generally taken to include literal meaning in the use of language.”

Metaphor may be thought of “as an eminently cultural linguistic phenomenon”. However, “There are several different ways of thinking about the nature of context in metaphor production that is not necessarily cultural” (Kövecses, 2017, p. 307).

Metaphors both define and are defined by context. “The purpose of metaphorical framing is to convey an abstract or complex idea in easier-to-comprehend terms by mapping characteristics of an abstract or complex source onto characteristics of a simpler or concrete target” (Wikipedia, 2024). It “tends to illuminate certain aspects while obscuring others” (Norscini and Daniela, 2024, p. 14). Thus a complex phenomenon is rendered more concrete.

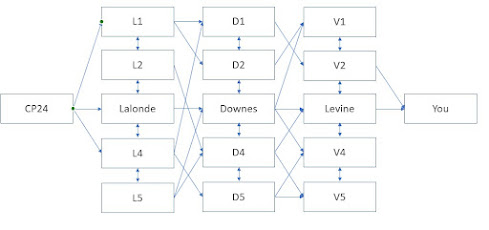

Context as model

Context as a model is predominantly found in the form of a ‘context model’. “Context models are used to illustrate the operational context of a system - they show what lies outside the system boundaries” (Kurkovsky, 2024; Sommerville, 2015, Chapter 5).

In an ontology, a context model helps define a subject using a semantic analysis of information related to the subject. Wang et al. (2004, pp. 18-19) describe several informal context modelling approaches and present a formal context ontology. A software system context model “explicitly depicts the boundary between the software system and its external environment” (Johnston, 2021). A physical system context model may define an environment for a software simulation, for example, digital twin (Sahlab et al., 2022, p. 463).

Large language models (LLMs) also have mechanisms to define context. For example, a ‘context window’ bounds the amount of input an LLM can take into account in a single request. A recently released version of Google Gemini defines a 1-million-token context window that allows it “to understand up to one hour of video, 11 hours of audio, over 700,000 words (so it could read, digest and answer questions about Tolstoy's War & Peace) or over 30,000 lines of code” (Pichai, 2024).
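To make the idea of a bounded context window concrete, the sketch below keeps only the most recent messages that fit within a fixed budget. It is a simplified illustration under stated assumptions: the function `fit_to_window` is hypothetical, whitespace-separated words stand in for tokens, and dropping the oldest messages first is just one possible policy. Real systems count tokens with a model-specific tokenizer.

```python
def fit_to_window(messages: list[str], window: int) -> list[str]:
    """Keep the most recent messages whose combined size fits the window.

    Size is counted in whitespace-separated words, a rough stand-in for tokens.
    """
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop at the first that does not fit
    for message in reversed(messages):
        size = len(message.split())
        if used + size > window:
            break
        kept.append(message)
        used += size
    return list(reversed(kept))


history = ["one two three", "four five", "six seven eight nine"]
print(fit_to_window(history, 6))
```

Anything that falls outside the window is simply not available to the model for that request, which is why the size of the window matters.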

Today, the Model Context Protocol (MCP) is used by generative AI systems such as Claude as a mechanism connecting them to underlying systems and information such as graphs and databases on local filesystems or accessible in the cloud (Anthropic, 2024).

Types of Model

It is beyond the scope of this review to identify and define the full scope of models and model technology; the typologies offered below provide a sense of this scope with respect to context.

Process Models:

Mathematical - for example, “mathematical models of combat activities and combat means of destructions, and their development paths of the use of troops in the process of preparation” (Mikayilov and Bayramov, 2019, p. 156)

Deterministic - for example, to define ship speed optimisation from the perspective of cash flow (Beullens et al., 2023).

Stochastic - for example, decision support in a dynamic environment such as a wildfire (Roozbeh et al., 2021).

Forces – for example, Porter’s Five Forces (Porter, 1979).

Neural Network Model - a simplified model of the operations of a human brain involving creation and activation of patterns of connection (IBM, 2021).

Business Models:

Logic Model - a mechanism for describing inputs and outputs for translation of business data into outcomes or benefits. For example, Paul et al. (2015, p. 17).

Mental Model - “an overarching term for any sort of concept, framework, or worldview that you carry around in your mind.” Clear (2024) provides a pretty good list. Boyd (1973) describes forms of mental models as patterns or concepts in his description of OODA (described above).

Case - a specific business-focused description of a business situation, either for study after the fact or that “puts a proposed investment decision into a strategic context and provides the information necessary to make an informed decision” (TBS, 2009).

Computational Models:

Data Model - a representation of data structures, which may be a schema (see above) or ontology, depicted textually (XML, JSON) or as a diagram (Entity Relationship (ER) or Unified Modeling Language (UML)). See for example ‘context data model’ (Ceri et al., 2007) or ‘contextual design model’ (Holtzblatt and Beyer, 2017).

Simulation - for example, “The use of modeling and simulation offer a better understanding of the concepts and solutions for commander's decision making” (Cîrciu et al., 2010, p. 93; Connable, et al., 2014).

Multi-Objective Optimization - for example, a multi-objective model for hub location and cost sharing (Mrabti et al., 2022).

Validation

Models are intended to serve as representations of processes, data or physical environments. As such, unlike schemas or frames, models have a unique requirement of validation. The following terminology is employed:

Verification: The process of determining that a model implementation and its associated data accurately represent the developer’s conceptual description and specifications.

Validation: The process of determining the degree to which a model and its associated data provide an accurate representation of the real world from the perspective of the intended uses of the model.

Accreditation: The official certification that a model, simulation, or federation of models and simulations and its associated data is acceptable for use for a specific purpose. (All quoted from AcqNotes, 2024; DoD, 2008; Owen and Chakrabortty, 2022).

In a wider context, other criteria and terminology may be used to evaluate models, for example, model fit and measurement invariance (Goldammer et al., 2024). Similarly, an ‘inference to the best explanation’ model minimally consists of the following:

Abduction: The generation of candidate hypotheses and theories.

Epistemic value: One or more epistemic values that order theories.

Theory evaluation: An aggregation operation that takes orderings of theories and yields an overall ordering (Quoted from Rast, 2023, p. 3).

Additionally, theory evaluation may consider ‘epistemic virtues’ such as simplicity, paucity, or commensurability.
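The three components listed above can be illustrated with a small sketch of the aggregation step. Rast's account does not prescribe a particular aggregation operation here; the Borda count used below is one common choice, swapped in purely as an assumption. The theory names, the epistemic values, and their orderings are all invented for the example.

```python
def borda_aggregate(orderings: dict[str, list[str]]) -> list[str]:
    """Combine per-value orderings of theories (best first) into one overall ordering."""
    scores: dict[str, int] = {}
    for ranking in orderings.values():
        n = len(ranking)
        for position, theory in enumerate(ranking):
            # The best-placed theory earns n - 1 points, the worst earns 0
            scores[theory] = scores.get(theory, 0) + (n - 1 - position)
    # Sort by score descending, then by name so that ties are deterministic
    return sorted(scores, key=lambda t: (-scores[t], t))


# Abduction step is assumed to have produced candidates T1, T2 and T3;
# each epistemic value then orders them (illustrative orderings only)
orderings = {
    "simplicity": ["T1", "T2", "T3"],
    "explanatory_scope": ["T2", "T1", "T3"],
    "fit_to_data": ["T2", "T3", "T1"],
}
overall = borda_aggregate(orderings)
print(overall)
```

Other aggregation rules, such as weighting some values more heavily than others, would fit the same three-part structure.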

Summary

In this section we considered the nature and attributes of context as it informs human and computational cognition, and in particular, expanded upon three major interpretations of context: as a schema, as a frame, and as a model.

It is not clear that any individual interpretation of context offers a comprehensive understanding of decision-making as referenced in section 1. The three interpretations of context are themselves contextual in nature, offering a mixture of mechanism and metaphor in an effort to convey an intuitive understanding of the subject.

In the next section, we will examine the role of data in the decision-making process generally and offer a broader decision-making model that explicitly incorporates contextual factors.

--

Image source: Figma https://www.figma.com/resource-library/context-diagram/

This article is based on work completed for Defence Research and Development Canada, Contract Report DRDC-RDDC-2025-C035

References will be listed after the series is complete.

Context Awareness and Context Recognition in Modern Decision-Making - 1 12 Mar 12:25 PM (24 days ago)

Section One: Modern Decision-Making

Introduction

Today's decision-making process takes place in an increasingly dynamic and complex environment. Today’s decision-making domains include not only the traditional physical environments but also digital age domains such as cyber and information. The speed at which the environment changes has increased and decision-makers require new ways to adapt to changing contexts in which they create change and fulfill intents.

This report presents the results of a scoping study in which the changing dynamics of context recognition and awareness are mapped and a decision-making model based on this mapping is outlined. It is divided into four main sections.

- The first section, which you are reading, surveys the concepts of context awareness and context recognition, outlines theories of modern decision-making, and concludes with a discussion of situation recognition and awareness.

- The second section describes context recognition as a process of representing complex environments as characteristic schemas, frames or models, and identifies the roles these abstractions play in decision-making in terms of data, information and capability.

- The third section presents research results based on both a literature review and interviews with Canadian Forces command personnel. A circular graph-based task model is proposed depicting the multiple simultaneous influences of task domains on each other, as an effect of context, and as a basis for context recognition.

- Finally, the fourth section suggests avenues of future research that may expand our understanding of context recognition and identify how the use of digital technologies such as artificial intelligence may assist decision-makers in context recognition and decision-making tasks.

Literature Review

This review identifies relevant materials in the federal science database, focusing on key terms within the field, in addition to related work found through expanded-search techniques on the World Wide Web. The review was conducted within the context of the pre-defined concepts of ‘command and control’, but may be applied to wider contexts.

The review followed considerations related to definitions of ‘context’ and subthemes such as ‘context awareness’ and ‘context recognition’, which depict ‘context’ as a type of generalisation that can lead to appropriate response. The review identified three major interpretations: context as schema, context as frame, and context as model.

- A schema is a semantical framework that helps individuals organise, process, and store information about their environment. It can be thought of as a semantic concept composed of two major parts: significant words or symbols interspersed with blank-space placeholders; and a method or condition specifying how the placeholders are to be filled to obtain instances.

- A frame is a psychological attitude defining a set of background assumptions and expectations. (Lakoff)

- A model is a scientific or computational construction defining an environment, process or system. Models may be deterministic, stochastic, or probabilistic. Types of model include a logic model, mental model, simulation, or multi-objective optimization.

Defining Context

Context may be defined as “any information that can be used to characterise the situation of entities”. The term ‘context’ is frequently used in conjunction with terms related to capability or capacity, such as ‘context recognition’ or ‘context awareness’.

Context Awareness is the “current characterization (as described by pattern, scenario, type or template) of the situation of entities”, i.e., “the ability to detect, sense, interpret, act and respond to relevant aspects of the environment, such as location, time, temperature or user identity,” where relevance is determined by the current task or set of objectives, and which could enable a prediction of actor intentions or future events.

Context Recognition is “recognition of a previously characterised context (as described by pattern, scenario, type or template)”, including possible interpretations, actions and responses, supporting a prediction of actor intentions or future events.

To contrast context and situation, we say that a ‘situation’ is the state of affairs in the environment relevant to a decision or an action, while a ‘context’ is a type of generalisation that can be inferred from the situation and that is in turn associated with specific decisions or actions. For example, the situation may be that orange and black stripes are present in a canopy of green, while the context is that you are near a tiger.

In this discussion, context is thought of as a range of possible situation classifications such that we are able to classify the situation as, e.g., class 1 or class 2. There may be different context sets addressing various perspectives of context.
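The idea of context as a classification of situations can be sketched with the tiger example. This is a minimal illustration: the feature names, the second context class, the overlap score, and the 0.6 threshold are all assumptions made for the example, not a method proposed in this report.

```python
from typing import Optional

# Hypothetical context set: each context is characterised by a pattern of features
CONTEXTS = {
    "tiger nearby": {"orange stripes", "black stripes", "green canopy"},
    "campfire nearby": {"orange glow", "smoke", "crackling"},
}


def recognise(situation: set[str], threshold: float = 0.6) -> Optional[str]:
    """Classify an observed situation as the best-matching context, if any."""
    best, best_score = None, 0.0
    for context, pattern in CONTEXTS.items():
        # Fraction of the context's pattern that is present in the situation
        score = len(situation & pattern) / len(pattern)
        if score > best_score:
            best, best_score = context, score
    return best if best_score >= threshold else None


observed = {"orange stripes", "black stripes", "green canopy", "birdsong"}
print(recognise(observed))
```

A different context set, built for a different perspective, could classify the same observed situation in another way.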

The Decision-Making Environment

Decision-making itself is a structured process, following a logical progression through:

- The identification and analysis of a problem;

- The development of options for solutions to the problem; and

- The translation of conceptual options into a plan that can be executed (CACSC, 2018).

Decision making is anticipated to occur in an extremely complex and interactive future environment (such that) future operating environments will require a real time, fully networked... capability” to support “integration and synchronisation of actions” (Tucholski, 2021 5; JP 3-0, 2022). The eventual outcome will be a cloud-based decision-making environment that incorporates a large number of “relevant data feeds as well as artificial intelligence and machine learning to enable decision-makers to maintain detailed situational awareness of the environment” (Gordon, 2023; Cliche, 2024).

Modern Decision Science

The focus of modern decision science “is on building a framework capable to offer an effective tool for decisions in the field of force planning and operations planning” (Yuan and Singer, 2021), which requires a capacity to respond to dynamic and changing environments.

Classic decision-making science approaches environments as systems to which qualitative methods such as scenario spinning, operational gaming, or Delphi techniques, may be applied (Davis et al., 2005, p. 33). However, in the face of increasingly complex environments, “Instead of seeking to “predict” effects on a system of various alternatives and then ‘optimizing’ choice, it may be far better to recognize that meaningful prediction is often just not in the cards and that we should instead be seeking strategies that are flexible, adaptive, and robust” (Ibid., p.46).

Some modern decision-making approaches found in the literature follow. Each of them highlights the role of context in decision making in a modern environment.

OODA Loop: John Boyd's observation-orientation-decision-action metaphorical decision-making cycle (or "OODA loop") is used, for example, to make fast and accurate decisions (Maccuish, 2012, p. 67). “Because they’re developed and tested in the relentless laboratory of conflict, military mental models have practical applications far beyond their original context.” In the OODA model, context plays a key role in the ‘orientation’ stage.

Orientation “involves assessing the relevance and significance of the data, understanding how it fits into the larger context, and identifying potential opportunities or threats” (Wale, 2024). The OODA loop is recognizable in the Canadian Forces Operational Planning Process (OPP), which recognizes five stages: initiation, orientation, course of action development, plan development, and plan review (CACSC, 2018, pp. 11-16). The OPP is informed by descriptions of other actors, terrain, structures, capabilities, organizations, people, and events (ASCOPE) (CACSC, 2018, p. 18).

Intent Model: David Marquet's intent-based leadership (IBL) model “is not based on the flow of power from one individual to another as in the leader-follower model, but is instead based on a goal, or intent, shared between individuals. By analogy, the leader-follower model is similar to command and control, but the IBL model is similar to mission command” (Fernandez-Salvador, 2017).

While IBL is most often discussed from a leadership perspective, as a training model it develops a learner’s sense of context. “With IBL, learners gain experience in making sense of a problem. As they develop the solution to a problem, the problem begins to make sense, and learners begin to problem solve and adapt” (Duffy and Raymer, 2010, p.v).

Joint Decision-Making: Complex environments often require multiple organizations and branches and hence entail joint decision-making. The joint decision-making model builds on the OODA loop to create constructs like joint operational planning and joint information and intelligence preparation in order to enable a systems understanding of an information environment (Sylvestre, 2022, p. 14).

Robust Decision-Making (RDM): Robust decision making (RDM) “is a quantitative, decision support methodology designed to inform decisions under conditions of deep uncertainty and complexity (to) help defense planners make plans more robust to a wide range of hard-to-predict futures” (Lempert et al., 2016, p. 2).

In contrast to “agree-on-assumptions” (Kalra et al. 2014) or “predict-then-act” approaches to decision-making, RDM takes an “‘agree-on-decisions’ approach, which inverts these steps,” using “models and data to stress test the strategies over a wide range of plausible paths into the future.”

Decision Making under Deep Uncertainty (DMDU): In cases of deep uncertainty there is no agreement on how the system works or on what future outcomes may be. Accordingly, as Kwakkel and Haasnoot (2019, p. 357) argue, various representations or models may apply. Scenario thinking, exploratory modelling and adaptive thinking are methods of preparing for alternative situation types. DMDU proposes a taxonomy of such methods (of which RDM, above, is one) that apply in different cases.

Situation Recognition

Modern decision theory requires situation awareness (SA) in order to comprehend which, if any, representation or model may apply. “Determining exactly what constitutes SA is a very difficult task, given the complexity of the construct itself, and the many different processes involved with its acquisition and maintenance” (Banbury and Tremblay, 2004, p. xiii). Moreover, “...models of SA refer to cognitive processes in general terms, but do not specify exactly what processes are involved and to what extent” (Ibid).

An understanding of the mechanisms of arriving at situation awareness, here called ‘situation recognition’, is required. “The test of situation awareness as a construct will be in its ability to be operationalized in terms of objective, clearly specified independent (stimulus manipulation) and dependent (response difference) variables ... Otherwise, SA will be yet another buzzword to cloak scientists' ignorance” (Flach, 1995, p. 155).

In computer vision, “‘situation recognition’ is the task of recognizing the activity happening in an image, the actors and objects involved in this activity, and the roles they play. Semantic roles describe how objects in the image participate in the activity described by the verb” (Pratt et al., 2020, p.2).

This involves (per Wikipedia):

- “the perception of the elements in the environment within a volume of time and space, the comprehension of their meaning, and the projection of their status in the near future” (Endsley, 1995b).

- An alternative definition is that situation awareness is adaptive, externally-directed consciousness that has as its products knowledge about a dynamic task environment and directed action within that environment.

According to Endsley (Ibid.), expert decision-makers act first to classify and understand a situation and then proceed to action selection, for example by matching it to prototypical situations in memory, as described for experts in general (Dreyfus, 1981), chess players (deGroot, 1965), managers (Mintzberg, 1973) and scientists (Kuhn, 1970) (p. 34). This process comprehends three major approaches developed in the years following Endsley’s work.

- Schemata, which are coherent frameworks for understanding information (information is lost but becomes more coherent and understandable). Scripts (a special type of schema) provide sequences of appropriate actions

- Mental models, which may be “mechanisms whereby humans are able to generate descriptions of system purpose and form” (Rouse and Morris, 1985, p. 356). Experts shift from representation to abstract codes (Ibid), for example, the situation model “provides a mechanism for the single-step ‘recognition primed’ decision-making” (Endsley, 1995b, p. 43)

- Development, whereby schemata and mental models are developed as a function of training and experience in a given environment - Holland, Holyoak, Nisbett and Thagard (1986).

As seen through the examples below, more recent models are based on graph analysis. “Existing situational awareness systems use prebuilt situational knowledge-based symbolic reasoning, making it very difficult to infer situational knowledge building or unexpected situations in complex, time-space dynamic environments such as battlefields” (Lee et al., 2023, p. 6057).

Examples of Situation Recognition Models

Following are a few examples of situation models drawn from the literature:

- Grounded Situation Recognition, developed by AllenAI, is “a task that requires producing structured semantic summaries of images describing: the primary activity, entities engaged in the activity with their roles (e.g. agent, tool), and bounding-box groundings of entities” (Pratt et al., 2020, p. 1).

- Chmielewski and Sobolewski (2019, p. 38) describe situation recognition as a “consistent data flow process, consisting of: data generation, data integration and filtration, data visualization, and knowledge acquisition and reasoning.”

- A situation awareness model with human-machine collaboration proposed by Meng et al. (2022, p. 1443) comprises a human cognitive part that includes situation perception and a machine part that includes situation recognition, where situation recognition “mainly corresponds to human's situation perception part, which is an intuitive analysis of the current situation from objective data in reconnaissance intelligence, trend intelligence and other data” (p. 1444).

- Baek et al. (2022, p. 308) describe a distributed graph matching network “to classify multiple agents based on their graph semantic information” and a “hypergraph to analyze high-order relationship between agents.”

- Lee et al. (2023, p. 6041) describe an architecture based on multi‑modal data and graph neural network (p. 6042) comprising four key parts: multi-agent based manned-unmanned collaboration architecture, robust tactical map fusion technology, hypergraph based representation learning, and space-time multi layer model. “The proposed model provides collaborative intelligence-based real-time battlefield situation recognition technologies” (p. 6066).

Summary

Modern decision-making has evolved from a simple process, described by the OODA loop, to a complex process that involves the development and application of models based on prior knowledge, scenario building, and situation recognition.

This has shifted the emphasis in decision-making from being one in which being informed and aware is sufficient to one in which considerable pre-planning, including especially model-building, is required. The task of developing and applying such models is often a joint one, involving as well the development of collaborative processes and information networks.

--

Image source: Strategy Punk https://www.strategypunk.com/navigating-the-ooda-loop-mastering-adaptive-decision-making-in-complex-situations/

This article is based on work completed for Defence Research and Development Canada, Contract Report DRDC-RDDC-2025-C035

References will be listed after the series is complete.

Grappa-Ling With Mark Carney (2) 28 Feb 7:05 AM (last month)

Although he tries to add some anecdotes, the first four chapters of Carney's book read like an economic text, covering as they do the histories of value theory and of money. There are no great revelations here, though it feels like the chapters are a subtle push-back against people who still believe in mercantilism or the gold standard.

The chapters also feel like they are addressing the absolutism that characterizes a lot of political thought rooted in (some of the) principles of economics. I want to highlight a few of these that are relevant to me.

First is the distinction between what we might call 'productive' and 'unproductive' economic activity. Carney makes the point, with which I agree, that this can be pretty arbitrary. To begin, we have two sets of factors that do not contribute to the 'value' of an object:

- rent, and rent-seeking activities

- profit, and profit-seeking activities

There are also activities that are deemed 'non-productive' because

- they do not result in economic activity or commercial sales

- they are performed by people who are not paid to perform them

Value today is determined not by intrinsic worth but rather by subjective assessment (including the famous laws of supply and demand) as mediated by a range of other factors. Carney points to "widespread ignorance of both (this approach's) limitations and its impacts":

- market failures - prices become skewed in cases of over-abundance and extreme scarcity

- human frailties - including a lack of full knowledge and non-rational decision-making

- the welfare of nations - including the failure to value essential social services

- the theory of market sentiments - where profit and rent-seeking are valued

The danger of market economics is that it is a self-fulfilling prophecy: if that which is not in the market is not valued, then there is a tendency to bring everything that is valued into the marketplace. But the marketplace has one determinant of value: price. And as Carney says, "effective market functioning requires other sentiments, such as trust, fairness and integrity."

He makes essentially the same point in his discussion of money, reaching much the same conclusion from another direction. Money, like value, has undergone a transition over time from being backed by assets (such as land or gold) to being backed by a set of institutions, and most notably banks. This wasn't because gold is not valuable, but because being locked into a gold standard "would ultimately fail because its values were not consistent with those of society. It prioritised international solidarity over domestic solidarity."

Quite simply (and this is a bit of an overstatement) the ability to govern required the consent of the governed, illustrated by Carney through an extended discussion of the Magna Carta. "In order to function within a democracy, the authority of independent bodies must be constrained, allowing them to do only that which is necessary to pursue specific objectives, and they must be accountable to the people for their performance." (p. 77)

This leads to a discussion of the evolving role of banks, and in particular, of central banks, in preserving the value of money. They do this by fixing interest rates to regulate the cost (and therefore supply) of money, and (more recently) regulating and backing the stability of banks. This authority was historically dedicated to fighting inflation at all costs, but over time, has come to include a mandate to consider the economic health of the country more generally.

There is to my mind far too much talk of 'tough decisions' in chapter four. These decisions aren't 'tough' at all for the people who are making them, unaffected as they are by unemployment, low wages, and increasing poverty. The battle against inflation represents first and foremost the interest of those with money, and protecting the value of that money isn't 'tough' at all for them. The actual 'tough' decisions they face are the decisions that promote the welfare of the people even when it harms the interests of those with money.

In any case, Carney draws some important lessons from all of this:

- the importance of flexibility in inflation targeting - the bank can't control everything, but it can control the balance of the pain caused by, say, Brexit, between workers and the wealthy

- a focus on price stability can become a dangerous distraction from other fundamentals, such as regulating banks

- "trust can be undermined not just through a loss of certainty about the future value of money, but also through a loss of confidence in banks or even a loss of faith in the financial system itself" (p. 85)

These lessons in turn lead to his overall conclusion: "The value of money and the legitimacy of the Bank come from people’s trust and their belief in the fairness and integrity of the system... That is a clue to what gives money its value: resilience, solidarity, transparency, accountability and trust." (pp. 86-88)

Honestly, this is a conclusion I really want to believe. It has all the elements of a foundation for liberal democracy. I'm just not sure it can be sustained.

What we really see through these first four chapters is a complex of three major forces:

- nature - the intrinsic value of things

- authority - the influence of wealth and power

- values - the conditions required for consent.

None of these is going away; the events of the last decade should make that clear.

Human needs (and human suffering) show us that some things are vital no matter what the price (including especially the basics of human sustenance). Even if labour is worth nothing, people will fight to get what they need to survive. And this is exacerbated by forces beyond our control as individuals, such as resource depletion and climate change.

Wealth and power are also not going away. We still see in some countries a willingness to invade others. And we see the influence of the wealthy over public policy and government. Rent-seeking and profit drain the resources of the poor, pushing them towards subsistence-level existence.

The need for the consent of the governed is probably the least reliable in the current age. Carney points to "resilience, solidarity, transparency, accountability and trust," but he will have to come to grips with the fact that, as Chomsky argues, these can be 'manufactured' through propaganda, deception and misinformation, rather than earned. And even if we are in the business of earning trust, it's not clear that these are the values that inform society.

I always keep in mind the most salient argument in Patrick Watson's The Struggle for Democracy - the willingness to debate and reason in good faith about the governance of a society depends on the existence of a certain level of prosperity in that society. The same is true of values. Some values - most values, even - don't survive poverty. What we really believe is ethical is not always what we describe in our ethical theories.

Grappa-Ling With Mark Carney (1) 21 Feb 7:14 AM (last month)

I've been reading Mark Carney's book Value(s). It seems a reasonable read given his new place in Canadian political affairs. It's a serious book by a serious thinker, which I must say is a refreshing change in a landscape dominated by demagogues. Will I support everything he says? No. But the thinking here is well worth engaging.

Here (I say after having read a chapter and a half) is the core argument: value is based on values.

He sets this up with an argument offered by Pope Francis:

Our meal will be accompanied by wine. Now, wine is many things. It has a bouquet, colour and richness of taste that all complement the food. It has alcohol that can enliven the mind. Wine enriches all our senses. At the end of our feast, we will have grappa. Grappa is one thing: alcohol. Grappa is wine distilled.

Humanity is many things – passionate, curious, rational, altruistic, creative, self-interested. But the market is one thing: self-interested. The market is humanity distilled. And then he challenged us: Your job is to turn the grappa back into wine, to turn the market back into humanity. This isn’t theology. This is reality. This is the truth.

Now I like this sentiment for many reasons. For one thing, it sets his values - and mine, and apparently Pope Francis's - apart from the sort of fundamentalism that reduces everything worthy in humanity to a single calculation based on self-interest. A lot follows from that.

And in particular, this calculation pushes back against a dominant contemporary theory of value: that value is based on price. This is important because it means that price isn't based on value, it is a relative and fluctuating measure based on willingness to pay, as determined by the (value-free) free market. A lot follows from this.

For example, in my own world, we have to choose between which research projects we pursue and which we don't, since we have limited time and resources. Where I work, the dominant metric has sometimes been whether there is a private sector company willing to pay for the work. Without commercial partners, the work will not proceed. This one fact has delayed my own work on personal learning environments by decades, because there's no market demand for a free product that makes access to education free.

But if values determine value, what are those values? Carney takes a stab at it in the first chapter:

The experience of the three crises suggests that the common values and beliefs that underpin a successful economy are:

- dynamism to help create solutions and channel human creativity;

- resilience to make it easier to bounce back from shocks while protecting the most vulnerable in society;

- sustainability with long-term perspectives that align incentives across generations;

- fairness, particularly in markets to sustain their legitimacy;

- responsibility so that individuals feel accountable for their actions;

- solidarity whereby citizens recognise their obligations to each other and share a sense of community and society; and

- humility to recognise the limits of our knowledge, understanding and power so that we act as custodians seeking to improve the common good.

Now if I were to select a set of basic values that underpin everything I do, these wouldn't be the values I would choose. That doesn't make them bad values, by any means, and a politician could do a lot worse than this (examples aren't hard to find). But I don't really think this set of values suits the purpose to which they're being applied.

To my mind, there are two (not necessarily conflicting) sets of values at work that we need to consider: first, those describing the mechanisms that will underpin a successful society (and not just a successful economy, though Carney sometimes conflates the two); and second, those describing the purpose or reason we want a successful society in the first place.

Carney's list blends the two. We see measures that make the economy (society) work better, such as fairness, responsibility and solidarity; and we see measures that speak to the purpose of an economy (society): creativity, protecting the vulnerable, improving the common good.

Moreover, when looking at those that describe the purpose of an economy (society), Carney is focused almost exclusively on a broader social purpose. Not that there's anything wrong with that, but I don't think we begin with a social purpose; rather, a social purpose (just as a social knowledge) emerges from the very real concrete practical day-to-day purposes of individuals.

I think Carney knows this, if we are to judge by his account of Adam Smith in the next chapter. Here's an excerpt from his summary:

The central concept that links all of Smith’s works is the idea that continuous exchange forms part of all human interactions. This is not just the exchange of goods and services in markets, of meanings in language and of regard and esteem in the formation of moral and social norms. Humans are social animals who form themselves in action and interaction with each other across all spheres of their existence.

Smith’s goal in writing The Theory of Moral Sentiments was to explain the source of humankind’s ability to form moral judgements, given that people begin life with no moral sentiments. He believed that we form our norms (values) as a matter of social psychology by wishing ‘to love and to be lovely’ – that is, to be well thought of or well regarded.

Smith proposed a theory of ‘mutual sympathy’, in which the act of observing others and seeing the judgements they form makes people see how others perceive their behaviour (and therefore become more aware of themselves). The feedback we receive from perceiving (or imagining) others’ judgements creates an incentive to achieve ‘mutual sympathy of sentiments’ that leads people to develop habits, and then principles, of behaviour which come to constitute their conscience.

This long excerpt is necessary because it runs so contrary to contemporary caricatures of Smith as a market fundamentalist believing only in the 'invisible hand' as a source of value and values. There's far more to it, and as I argue in Ethics, Analytics and the Duty of Care, it is this concrete, practical and day-to-day moral sentiment that defined what is right, good and valuable in our own lives. It is only after we have run any proposal through this filter that we can speak of value as being emergent from the marketplace.

I have a lot more to read from Carney's 508-page volume, but I think we're off to a good start.

Content and Cognition 18 Feb 12:54 PM (last month)

In response to a post from Clark Quinn.

My view is that if you're still channeling Paul Kirschner you are at the very least endorsing an idea of cognition as a representational system, even if not a physical symbol system, in which there is a clear separation between 'content' and other (presumably incidental) cognitive activities. That to my mind would be enough to make you a cognitivist.

This isn't contrary to situationism. It can be true that our actions can inform our cognitive state, and still have a basis in both 'content' and (presumably incidental) non-content. Consider for example Wittgenstein inferring what a person 'knows' or 'believes' about the thickness of the ice as he walks across the frozen lake. And how we act can feed back into what we 'know' through the creation of contentful experiences.

I think that to be a non-cognitivist it is necessary to be a non-representationalist. What that means is a bit difficult to tease out (since, in principle, anything can be a representation of anything, if viewed in the right way). Minimally, though, to be a representationalist is to be able to describe functionally some significant property of a person that can be shared across physical instances without respect to the physical constitution of that property. In other words, for 'content', qua content, to have physical effects (ie., to influence thoughts, experiences and behaviours).

This is where the debate on consciousness comes in. We all (presumably) have consciousness. But what is it? Many (most?) theorists say that consciousness has to be consciousness *of* something (cf Descartes' cogito). So we can draw a separation between the 'content' of consciousness, and the experience (or 'qualia'). "There must be something more than the physical elements." But must there? In my view, consciousness is experience - that is, to be conscious is to have experiences. There's no distinction to be drawn between the two.

If you go sub-symbolic (which I think you do) then the 'representations' are patterns of neural activation. To be a cognitivist from that perspective becomes rather more difficult, as it requires holding that there are certain patterns of activation that are common across individuals (ie., you could see pattern P in both person A and person B) and where the *pattern* - and not the physical instantiation of the pattern - is causally relevant. I can't imagine such a thing, but I guess there are some constructivists that can.

In general - the cognitivist (ie., the non-reductionist) will always say there's something (usually formal) and non-physical that constitutes (actual) cognition, and that it is this 'content' that is what we are trying to pass from person to person in education. Presented in bits and pieces it can sound convincing, but when we view the mechanism as a whole, it becomes (to my mind) implausible.

AI and Environmental Justice 12 Feb 2:21 PM (last month)

Responding to: Harnessing AI for Environmental Justice, by request. This originated as a Mastodon thread; I'll preserve the use of the informal 'you' throughout.

--

So, the document is addressed to activists and takes a "yes and" approach to their objections to AI, including in many cases where 'yes' isn't really the response I'd have, but I recognize the need to write to the audience.

I'm also personally less likely to use 'stories' to describe narrative framing, and more likely to use the language of 'frames' and 'setting context'. Again, though, I put this down to writing to your audience.

But I would save my main comment for the term that appears in the title, 'environmental justice'. The paper doesn't really grapple with the term until page 19, and this only in a well-placed call-out quote, specifically, "how might communities most adversely affected by climate impacts contribute to and shape conversations about the development of AI?"

And this leads to my major thought about the document as a whole: how do we ensure people aren't left out of AI?

I mean, when you get right down to it, AI is mathematics. And, it's more efficient to use a computer to do mathematics than to have a person do it. That's why NASA switched from human 'calculators' to computers.

This is the same for any other framing of AI. If you think of it as 'creativity', or 'content generation', or 'pattern recognition', it's going to be more efficient to use a computer than to employ a human.

That's why AI is a social justice issue.

So what do we mean, in this context, by environmental justice (which I take as closely related to social justice)? The paper posits: curiosity, transparency, accountability, diverse voices, sustainability, community, and intersectionality.

This is very much speaking to your audience, but I'm not seeing any theme or idea here. To put it in your terms: what is the story here? These are words they like to hear, but why are they here?

You could adopt a frame of 'justice as fairness' which asserts a narrative of non-harm and inclusion. But this classic of liberal ideology doesn't play well in this community.

Similarly, the utilitarian ethics, which underpins the twin ideals of beneficence and non-maleficence, doesn't play well with this audience.

Unfortunately, these are what generally tend to underlie the 'consensus' on AI ethics (a false consensus, IMO, but still).

What's left? Some kind of Kantian-Marxist critical theoretical approach, or some kind of communitarian ethics-of-care approach.

Your paper takes a little from column A and a little from column B, setting up a class conflict between marginalized people and big tech, setting up a narrative of resistance, and at the same time drawing on ecotopian tropes of 'just enough', diversity, collaboration and intersectionality. Plus, from some third place, sustainability.

So, we come back to: AI is mathematics. And it might even (as I think) capture the mathematics behind cognition, sentience and consciousness (but of course you don't need to believe all that, AGI notwithstanding).

This to me leads to two threads of discussion:

1. Mathematics is undeniably good, but how much mathematics is good? What kind of mathematics is good?

2. The ethics of mathematics, captured in (1) above, and the ethics of other things.

I'll do both.

In fact, we can save a ton of time and resources through the use of mathematics.

But math isn't inherently good. Blockchain involves the wasteful use of mathematics just to make something difficult. Doing statistics just to support gambling preys on the vulnerable.

And there is an inherent uncertainty involved in what we count, how we count, and who does the counting. I personally resist reframing everything in terms of 'value'. Justice does not equate to wealth.

And that leads to the second part: the contrast between a world defined by money, and everything else.

For example, why do we continue to use fossil fuels? Because it's cheaper, and industry doesn't care about anything else.

This debate has almost nothing to do with AI. I mean, maybe AI can make electrical grids more efficient, or nuclear plants safer, but it isn't at the core of the debate.

Big tech and AI are not synonymous.

Imagine someone said, when discussing math, that "resistance and refusal are important pillars." It makes no real sense.

I can see the sense of resisting oligarchy, authoritarianism, and inequality. But it doesn't follow that we should resist AI because they use AI. We should resist *their ownership* of AI.

If you take math, and apply it to everything we do, that's AI. It's all of ours to use toward, not against, a world that works for all of us.

Patterns, Facts, and AI 1 Feb 4:36 AM (2 months ago)

Replying to this thread.

I think if you scan through a hundred papers and find a pattern in those papers, and use that pattern to make something, you haven't copied anything.

Harder to do: you can also scan through a hundred papers, find the one original thing, and use that to make something. Then the question of whether you've copied is a matter of degree.

In neither case does it matter whether a machine or a human does this. What makes something ethical or not is the act, not the technology.

Anyhow. I scan through a hundred papers (more or less) every day to produce my newsletter. I try to find the original and highlight that. And my own original work is based on patterns I see in the data.

I passed over Doug's article because (in my view) it had been done before (not the least of all, by me, in 2019). There's no ethical issue or blame here; most of what is produced in the world (including most of what I produce) is not original.

Again, it's not the tech.

I think the hardest of all to produce is something original, based on a pattern no one has seen, that is useful to people.

I think that if a machine did that, there would be no real issue with the fact that it was a machine that did that, because we'd all be too busy trying to take advantage of this new knowledge.

But it's hard for a machine to find a new pattern, because there's so much pattern recognition already in human discourse. Useful is also really hard.

Certain patterns (eg., 'AI copies') get reified until they become 'fact'.

As @poritzj says, it's the data used to find the patterns that matters, for all sorts of statistical reasons. But who among the human pundits is honest about the complete corpus of material they draw upon?

If your data is bad - if it's not diverse, if it's not informed, if it's propaganda - then your pattern recognition is bad, and you reify the wrong things into facts the promotion of which is actually harmful.

That's my main criticism of most anti-AI writers: that their data is bad. They draw from popular and commercial press, or from (say) commercial publishers with a vested interest.

How to 'Like' a Post in Bluesky using Javascript 15 Nov 2024 1:57 PM (4 months ago)

The Problem

If you look at the API reference for Bluesky to 'like' a post, you'll find it isn't there.

If you use the NPM repository for the AT protocol you can 'like' a post with this simple command:

await agent.like(uri,cid)

This works - but you have to have installed and loaded the entire module to make it work, and then you're in module hell with versions and upgrades and the rest.

If you're ChatGPT you expect there's a simple API function, like this:

await fetch('https://bsky.social/xrpc/app.bsky.feed.favoritePost',{...})

but there isn't one.

What's key about Bluesky is that it separates the application layer and the data layer. This matters a lot because it changes how we need to think about doing things like giving a post a 'like'. It's not simply a matter of performing a function. It's a matter of creating a record in a repository. Which repository? My repository.

My repository is currently hosted on Bluesky, but I can imagine that changing. The application I'm using is also hosted by Bluesky, but that could change as well. So we need to be smart about how we describe the content we're liking and where we're storing the record.

The Record

I first create the record I want to store. Like this:

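const record = {
  // Record type: a Bluesky 'like'
  "$type": "app.bsky.feed.like",
  createdAt: new Date().toISOString(),
  // The post being liked, referenced by location (uri) and content hash (cid)
  subject: {
    uri: uri,
    // Type tag for the reference; the JSON field name is "$type", not "py_type"
    "$type": "com.atproto.repo.strongRef",
    cid: cid
  }
}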

In this record, I define the 'type' as a Bluesky 'like' record (and yeah, we could have any number of types). The 'subject' of this record is a specific post, which I identify as a 'strongRef' using both the 'uri' and the 'cid'.

The uri is the post's location as defined by the AT Protocol (short for Authenticated Transfer Protocol). It looks like this:

at://did:plc:v33us6ae36e4zl2qqijueffi/app.bsky.feed.post/3l576cazyjq2x

The AT address points to the repository where the post is located. The repository belongs to an individual who is identified by a DID - a decentralized identifier. The post sits in that repository's 'app.bsky.feed.post' collection, and the specific item has the identifier '3l576cazyjq2x'.

We can convert the AT address into a traditional web URL. The DID is associated with a 'handle', for example, 'guillecoru.bsky.social'. We can use bsky.app to view that post:

https://bsky.app/profile/guillecoru.bsky.social/post/3l576cazyjq2x
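Here's a minimal sketch of that conversion, using the example address above. The function names parseAtUri and toBskyAppUrl are my own, not part of any Bluesky library, and the sketch assumes you already know the handle that goes with the DID (it doesn't look it up).

```javascript
// Split a record-level AT URI into its three parts.
// parseAtUri is a hypothetical helper, not a Bluesky API call.
function parseAtUri(atUri) {
  const match = /^at:\/\/([^/]+)\/([^/]+)\/([^/]+)$/.exec(atUri);
  if (!match) {
    throw new Error(`Not a record-level AT URI: ${atUri}`);
  }
  const [, repo, collection, rkey] = match;
  return { repo, collection, rkey };
}

// Build the bsky.app web URL for a post, given its AT URI and the
// author's handle (assumed to be known already).
function toBskyAppUrl(atUri, handle) {
  const { collection, rkey } = parseAtUri(atUri);
  if (collection !== 'app.bsky.feed.post') {
    throw new Error(`Expected a post record, got: ${collection}`);
  }
  return `https://bsky.app/profile/${handle}/post/${rkey}`;
}

const example = 'at://did:plc:v33us6ae36e4zl2qqijueffi/app.bsky.feed.post/3l576cazyjq2x';
console.log(parseAtUri(example));
console.log(toBskyAppUrl(example, 'guillecoru.bsky.social'));
```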

The cid is the post's content-based address. It looks like this:

bafyreiccpgycnovrqguhbqgthtqbpfiyvhk52svq33uox65ojqz6qqewi4

The content ID (cid) was created by taking the content of the post and running it through a hash algorithm. You can read about the process here. I've written about it before.

The Save

Now I want to save my record to Bluesky. For this I will use a fetch() command. Here's what it looks like:

const response = await fetch('https://bsky.social/xrpc/com.atproto.repo.createRecord', {
  method: 'POST',
  headers: {
    'Authorization': `Bearer ${accessToken}`,
    'Content-Type': 'application/json',
  },
  body: JSON.stringify({
    collection: 'app.bsky.feed.like',
    repo: did,
    record: record
  }),
});

Fetch is making an API request to post a new record.

The headers contain my previously obtained access token. I obtain this when I create a session by logging in to Bluesky. It's a long string of characters.

The body of my request specifies the repository I want to add the record to. This will be my repository, identified by my DID, which I've stored in a variable called 'did'. I obtained this from Bluesky when I logged in. The body also specifies the collection I want the record to belong to, and of course contains the record itself.

That's it! In the full function I do some error checking just to make sure everything went OK.
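For what that error checking might look like, here's a rough sketch. It isn't my full function. The name likePost and the fetchImpl parameter (which just lets you swap in a fake fetch for testing) are made up for this example, and I'm assuming a successful createRecord reply is JSON containing the uri and cid of the new 'like' record.

```javascript
// Hypothetical wrapper around the createRecord call, with basic error checking.
async function likePost({ accessToken, did, uri, cid }, fetchImpl = fetch) {
  const record = {
    $type: 'app.bsky.feed.like',
    createdAt: new Date().toISOString(),
    subject: { uri, cid },
  };

  const response = await fetchImpl('https://bsky.social/xrpc/com.atproto.repo.createRecord', {
    method: 'POST',
    headers: {
      'Authorization': `Bearer ${accessToken}`,
      'Content-Type': 'application/json',
    },
    body: JSON.stringify({ collection: 'app.bsky.feed.like', repo: did, record }),
  });

  // A non-2xx status means the record was not saved; include the
  // server's reply in the error so it's easier to see what went wrong.
  if (!response.ok) {
    const detail = await response.text();
    throw new Error(`createRecord failed: HTTP ${response.status} ${detail}`);
  }

  // Assumed shape: { uri, cid } identifying the newly created like record.
  return response.json();
}
```

You'd call it with something like await likePost({ accessToken, did, uri, cid }) inside a try/catch.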

P.S.

This isn't the complete script. I just want to focus in this post on how to 'like' a post. You would still of course have to write the login script and get the post information. Those are exercises for another day.
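In the meantime, here's a minimal sketch of the login step, just to show where the access token and DID come from. It assumes the account is hosted on bsky.social and uses the com.atproto.server.createSession endpoint; the function name createSession and the fetchImpl parameter are my own choices for the example, and there's no token refresh here.

```javascript
// Hypothetical login helper: exchanges a handle and password for a session.
async function createSession(identifier, password, fetchImpl = fetch) {
  const response = await fetchImpl('https://bsky.social/xrpc/com.atproto.server.createSession', {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ identifier, password }),
  });

  if (!response.ok) {
    throw new Error(`Login failed: HTTP ${response.status}`);
  }

  // The session reply includes accessJwt (the access token) and did.
  const session = await response.json();
  return { accessToken: session.accessJwt, did: session.did };
}
```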

Assessment of Barriers to Educational Technology Acceptance 27 Oct 2024 2:32 AM (5 months ago)

So, uh, a lot of this presentation wasn't actually in the paper.

It's part of a project that we did in the National Research Council with an organization called DRDC.

And much of the presentation wasn't actually available to be shown at the time the paper was submitted, but now it is available.

Our publications are available. I will show a QR code at the end of the presentation where the slides are available, and you'll be able to access them. The first of two presentations is the one that this one is focused on: the analysis of barriers to technology adoption.

They're using surveys. Um, how do we know these surveys actually measure anything of value? Well, that leads us to the subject of validity and reliability.

Coming Together 23 Oct 2024 1:37 PM (5 months ago)

When I first started in this business of working online some thirty years ago I was somewhat surprised to learn that it would involve more, not less, travel. And so here I sit on a red-eye to Morocco, not for the first time, to immerse myself in a world where the call to prayer happens five times daily, where I will give my talk in French, where, maybe, I will be able to buy a fez in Fes.

It's not my world as I imagined it when I was young. Oh sure, I had a wanderlust from an early age, but I really had no idea of what to expect when I got to wherever I was going. How could I, an average boy being raised in a rural community in eastern Ontario, Canada? Even if I did read the news every day - and I did, before I delivered the paper that day - it would not and could not prepare me to see the world.

And it's not an easy world to travel through. There have always been wars, but they seem to be more intense today, with the stakes being greater. There have always been differences of opinion, but it feels today that we're too quick to jump toward hate. There have always been rumours and conspiracy theories, but today they're funded by state actors and spread at the speed of the internet.

And that leads me to the main point I want to talk about: the idea that we are fracturing into different factions, each with their own version of the truth, drifting toward a world where there are no facts, where nothing can be known for sure, where there are no foundations, no bases for ethics and morality, no common conception of the good, just individual tribes each fighting for a share of fewer and fewer resources on a stressed planet.

And I'm here to say that it isn't so.

No, that does not mean that I'm here to announce some common foundation or shared truth that we must all believe and that can form the basis for a future society, as so many others before me have. I doubt that such a foundation exists, nor would it be universally accepted even if it did. Someone could say "here is a hand" and there will always be someone who, with good reason, will object. Ceci n'est pas une pipe. It's all wordplay.

No, what I want to question is the idea that we're fracturing. I want to question the idea that there was once a common conception of The Way The World Is, that there were trusted sources on which we could all rely, that we knew what truth is, as plainly as we know the back of our hands.

Look at that hand, maybe. Is it young or old? Is it smooth or scarred? What colour is it? Do you remember all the spots you are now looking at? Were they all here last month? That large spot - is it the same size it was a year ago? I am describing my hand, of course. What it is about your hand, I couldn't imagine.

You see, the dominant narrative is that we were all once one society, but now we're drifting apart. But that has never been the case. We have never been one society. Not even those of us who were living together in a small eastern Ontario village.

We were just far away enough from Ottawa that the newly improved highway meant people could live in the country and commute to the city. That's what my father did, to his job at Bell. We were a city family, and lived in a different world from those around us who made their living on the farm. There was just enough farmer in us - my mother grew up on a farm - that we could make it work.

As a child my community was defined by its edges. When we lived in a Montreal suburb, we never spoke to the 'Frenchies', who lived next door. When we lived in Metcalfe, it was a clash of expectations; I was led to value reading, good grades, chess and public speaking, but the only currency of value in a rough and tumble farming community was achievement in sports.

We - the different communities - could and did live apart. There was virtually no overlap. It was an incredibly rare and valuable person - a Ralph James - who could transcend these boundaries. Last I heard, he was working at the gas station, which of course is the centre of the community.

It's not the same today. Digital technology in general and social media in particular are bringing communities together whether we want them to or not. It's called 'context collapse'. As originally envisioned, it means something like the idea that your work, family and friends, three separate contexts, are now collapsing into a single space. You see them all in the same environment - on Twitter perhaps, or Facebook, or Reddit, or Insta. That joke that would kill with your friends horrifies your parents and might get you fired at work.

If it were only that, it might be OK. But there's the aforementioned fracturing of society to consider as well. When I was growing up there were many types of people I never saw: black people, gay people, Muslims, indigenous people. I learned about them, a bit, but what I read in the news and in the library didn't really reflect the reality once I met it.

In many ways I was lucky. My love of learning led me to finish school in the community, to read people like Frances Moore Lappé while still a teen, to move across the continent to find work, to go to university, to meet gay people and Marxists and hippies, to travel to indigenous communities, and eventually, to meet with and work with and visit people around the world. It is a truly rare experience and I am incredibly grateful for having had the opportunity.

And today, having had such experiences, I find it laughable that I ever worried about how I dressed, how I looked, how I seemed to other people. The things that I thought were so important, especially when I was young, now seem so trivial in this wider world. It was as Laozi said, that all these things, these definitions of what is and what isn't, what's right and what's wrong, what is valued and what is worthless, are all artifices we create, and not part of some underlying 'reality', whatever that is.

But if all this is true (I hear you ask) then how could the dominant narrative be false? We live in a world now where instead of being one society, people are quoting Laozi and creating their own facts, their own truths, and even inventing their own sense of right and wrong. How can our society survive if we can't even talk to each other?

I'm here to say, our society isn't fracturing; it was always fractured, always divided into myriad subsocieties. True, it never felt divided. But that was because these others were beyond our view. They were there, but we literally did not see them, literally did not know that they existed, and even if we had any inkling, we didn't want to know.

It's like when I was a kid. It's not like black people, gays, and Indigenous nations didn't exist. But they existed in my consciousness, if they existed at all, only as fables, only as an artifice created by news media and television and even the books I read and classes I took.

As I grew older, as I read more, as I traveled more, it was like a mist separating me from them began to lift, and I could see - at least partially - into their communities.

And this same thing is what is happening on a global scale. We are not fracturing, we are growing closer together. People and cultures and societies who were never a part of our daily existence are now right in front of us, unavoidable. A gay person is gay, and you have to look. A Muslim references Allah, and you have to listen. Someone you met online talks about Tang poetry, and you have the whole library at your fingertips (with Google to translate it for you).

It's no surprise that people want the fog to come back down, so that things will be the way they were. But nothing gets better that way. The old prejudices return, the old myths prevail. Some cultures are repressed, other cultures are exploited, and others threaten the well-being of an entire planetary community.

We will never be united, never share a single common language or world view or way of life, but at least now we can see that each other exists. We're not required to agree with them, to share their values, or to believe what they believe. But what we can do, at least, is talk with each other - to see them as less of the 'other' and more like different versions of ourselves.

People talk of this being the atomic age, the space age, the information age - but in reality, it is the age of the great coming together. All the possibilities of human existence are laid out before us like some great smorgasbord. It can be hard, challenging, frustrating, but it is, for those of us who choose it, rewarding and enlightening.

Where we were apart, we are now together, a dense mesh of possibility.

Automated Translation in Javascript with Google Translate API 12 Oct 2024 5:09 PM (5 months ago)

The main thing to know here is that Google's AI does not know how Google's API interface works. It will give you advice that works fine with GET requests but translating anything fancy requires POST, which Google's AI doesn't understand. That's what I spent most of my afternoon discovering.

The rest of my afternoon was spent figuring out how it actually works, though. There are two major steps: first, setting up access to the translation API, and second, actually coding the Javascript. The second step is the easier step.

Setting Up Access

Note that I have a Google account and therefore access to the Google console. You'll need to set this up with Google Cloud. There may be costs involved; I have a business account which I pay for but I assume this would still work with a personal account. If you need to pay money anywhere I'm sure Google will tell you.

1. Create a Project in Google Cloud

- Open your web browser and go to: https://console.cloud.google.com/

- Create a new project - use the 'select a project' dropdown (upper left) or go to: https://console.cloud.google.com/projectcreate

- This should take you to your project page. Take note of your project ID.

2. Enable 'Cloud Translation' in your project

- On your project page, click 'APIs and Services'

- Search for "Cloud Translation" in the search bar and select the "Cloud Translation API" from the results.

- Click the "Enable" button to enable the Cloud Translation API for your project

3. Create an apiKey in your project

- On your project page, click 'Credentials' in the left-hand menu

- Click 'Create Credentials' (near the top of the page)

- Select 'API keys' from the dropdown

- Copy the key; you'll never see it on Google again

Coding the Javascript

The trick here is that you're using async functions with fetch requests. This forces Javascript to wait for a reply from the translation engine.
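To make the async/await idea concrete, here's a minimal sketch of a POST request that waits for the translation to come back. Treat the details as assumptions: it targets the Cloud Translation Basic (v2) REST endpoint with an API key in the query string, which may not be the same version or request shape used in the rest of this post, and the function name translateText and the fetchImpl parameter are made up for the example.

```javascript
// Hypothetical helper: POST text to the translation endpoint and wait for the reply.
async function translateText(text, targetLanguage, apiKey, fetchImpl = fetch) {
  // Assumed endpoint: Cloud Translation Basic (v2), authenticated with an API key.
  const url = `https://translation.googleapis.com/language/translate/v2?key=${encodeURIComponent(apiKey)}`;

  // 'await' pauses this function until the server responds.
  const response = await fetchImpl(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ q: text, target: targetLanguage, format: 'text' }),
  });

  if (!response.ok) {
    throw new Error(`Translation request failed: HTTP ${response.status}`);
  }

  // Assumed reply shape: { data: { translations: [ { translatedText } ] } }
  const result = await response.json();
  return result.data.translations[0].translatedText;
}
```

Because the function is async, the caller also has to wait for it, for example with const french = await translateText('Hello', 'fr', apiKey) inside another async function.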

1. The input form

<input type="text" id="inputText" placeholder="Enter text to translate">

<input type="text" id="inputProject" placeholder="Project ID">